The world we live in is changing rapidly, especially since the COVID-19 pandemic hit. The availability of large amounts of information in these times of uncertainty is one of the greatest gifts of our time. But as more and more diverse data becomes available, it gets harder for enterprises to manage this ever-growing volume of information and derive value from it.

However, investing in a set of advanced cloud technologies and data analytics services empowers businesses to extract more value from data. So, to leverage the current crisis as an opportunity, modern enterprises need to embrace effective tools, technologies and novel approaches to data analytics.

In a world of big data, Microsoft Azure data integration services are helping businesses to manage and optimize their data in the cloud. Azure Data Factory is one such data service that allows enterprises to transform their raw data into meaningful insights with a “lift-optimize-shift” strategy.

What is Azure Data Factory?

Data Factory falls under the integration category of services in the Azure catalog. Azure Data Factory (ADF) is Microsoft’s cloud-based data integration and migration service. It does not store data itself; instead, it orchestrates and automates the movement and transformation of large volumes of data in the cloud. Simply put, it is a service that enables you to move data from one service to another.

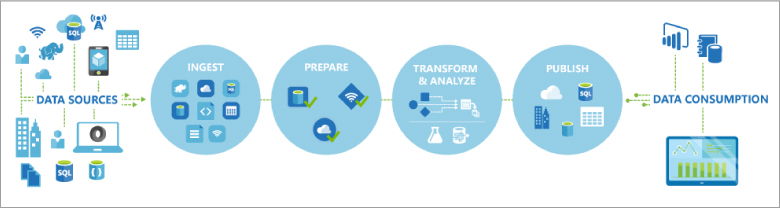

To get a handle on the service, it helps to know what it can actually do and what it empowers you to do. With the Azure Data Factory service, you can:

Compile and connect: Collect data from diverse sources and consolidate it. The data could be structured, semi-structured, or unstructured.

Centralize and store: Move a variety of data from on-premises storage systems to a centralized location, such as a cloud-based store.

Transform and enhance: Process and transform the data using compute services such as Azure HDInsight, Azure ML, Azure Data Lake Analytics, and Hadoop.

Publish: Publish the organized data to cloud stores like Azure Data Lake for BI apps.

Visualize and analyze: Finally, visualize the output data for further analysis using BI tools such as Power BI or Tableau.

Source: Microsoft

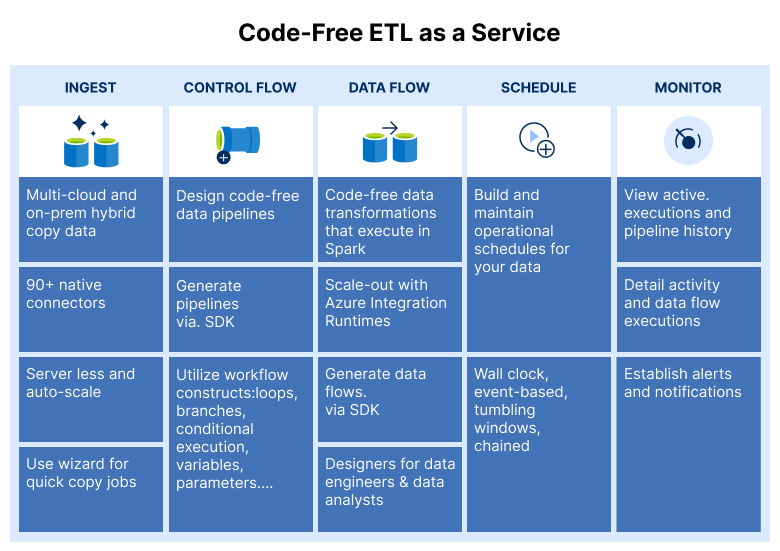

ADF therefore covers the complete process of extracting, transforming and loading (ETL) data. The service not only helps you automate data migration to the cloud and encapsulate it in something called a pipeline, but also lets you preserve your ETL/ELT processes in a low-code or no-code environment.
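To make the pipeline idea concrete, here is a minimal sketch of how a pipeline with a single copy activity can be written out as JSON, built here as a Python dictionary. The names `CopyBlobToSqlPipeline`, `CopyRawToCurated`, `RawBlobDataset` and `CuratedSqlDataset` are hypothetical, and the source and sink types are assumptions chosen for illustration. A real definition depends on the datasets and linked services in your own factory.

```python
import json


def build_copy_pipeline(name, source_dataset, sink_dataset):
    """Return a dict shaped like a pipeline definition with one Copy activity.

    All names passed in are illustrative; the datasets they refer to
    would have to exist in the data factory already.
    """
    return {
        "name": name,
        "properties": {
            "activities": [
                {
                    "name": "CopyRawToCurated",  # hypothetical activity name
                    "type": "Copy",
                    "inputs": [
                        {"referenceName": source_dataset, "type": "DatasetReference"}
                    ],
                    "outputs": [
                        {"referenceName": sink_dataset, "type": "DatasetReference"}
                    ],
                    # Assumed source/sink pair: blob storage into a SQL table.
                    "typeProperties": {
                        "source": {"type": "BlobSource"},
                        "sink": {"type": "SqlSink"},
                    },
                }
            ]
        },
    }


pipeline = build_copy_pipeline(
    "CopyBlobToSqlPipeline", "RawBlobDataset", "CuratedSqlDataset"
)
print(json.dumps(pipeline, indent=2))
```

In the visual, low-code authoring experience you would normally not write this by hand; the sketch only shows that a pipeline is, underneath, a named list of activities that reference datasets.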

What is Azure Data Factory used for?

Most cloud projects require data migration activities across different data sources (on-premises or in the cloud), networks and services. Azure Data Factory therefore acts as a key enabler for enterprises stepping into the world of cloud computing.

Consider a case where you have to manage big data workflows in the cloud. To do that, you will have to deal with a variety of data that might be stored either in cloud-based stores like Azure Blob Storage or Azure Data Lake Store, or in some on-premises storage system. You already have services to transform the data, but the challenge is to find a way to automate moving the data to the cloud, then process and store it in a few clicks for further analysis. That’s where Azure Data Factory comes in.

Source: Microsoft

ADF is mainly used for serverless data migration and transformation activities such as:

- Building code-free ETL/ELT processes in the cloud

- Building visual data transformation logic

- Staging data for transformation

- Running SSIS packages and moving them to the cloud

- Executing a pipeline from Azure Logic Apps

- Attaining continuous integration and delivery (CI/CD)

Azure Data Factory version 2 offers even greater versatility and more operational uses, as it has much more advanced functionality than version 1.

Why Azure Data Factory adoption is on the rise

As the world moves to the cloud and big data, data integration and migration will remain essential for organizations across industries. ADF can help you address both efficiently by letting you focus on your data and schedule, monitor and manage your ETL/ELT pipelines from a single view.

Here are some reasons why the adoption of Azure Data Factory is on the rise:

- Drive more value

- Improve business process outcomes

- Reduce overhead expenses

- Make better decisions

- Increase business process agility

Besides, with ADF, you pay only for what you use. This serverless tool lets you integrate data cost-effectively without deploying any additional infrastructure.

Key features of Azure Data Factory

- Delta processing

- Monitoring and alerting

- Data movement security

- Scalability

- Control flow

- Parameterized pipelines

- Flexible scheduling
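Of the features above, delta processing is the easiest to show in miniature. The sketch below illustrates the general high-watermark idea in plain Python: only rows modified since the last successful run are picked up, and the watermark then moves forward. It is not ADF's own implementation; the `modified_at` column, the `select_delta` helper and the sample rows are all hypothetical.

```python
from datetime import datetime


def select_delta(rows, last_watermark):
    """Return the rows changed after last_watermark and the new watermark.

    If nothing changed, the watermark stays where it was.
    """
    changed = [row for row in rows if row["modified_at"] > last_watermark]
    new_watermark = max(
        (row["modified_at"] for row in changed), default=last_watermark
    )
    return changed, new_watermark


# Made-up sample data standing in for a source table.
rows = [
    {"id": 1, "modified_at": datetime(2021, 1, 1)},
    {"id": 2, "modified_at": datetime(2021, 1, 5)},
    {"id": 3, "modified_at": datetime(2021, 1, 9)},
]

changed, watermark = select_delta(rows, datetime(2021, 1, 3))
print([row["id"] for row in changed])  # [2, 3]
print(watermark.isoformat())           # 2021-01-09T00:00:00
```

Running the same function again with the returned watermark yields no rows, which is the point: each run copies only what is new instead of reloading the whole source.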

Turn your data into meaningful insights

Data analytics empowers modern businesses to surface powerful insights and uncover new business opportunities. Azure Data Factory offers an intuitive, visual, no-code or low-code environment for businesses to accelerate their data integration journey. The time to adopt ADF is now. If you want to move, ingest, transform and process your data and make smarter business moves, get in touch with our team of experts.