With the growing number of intelligent devices, an ocean of data is now available. However, many companies fail to learn from that data and underestimate the power of analysis to improve business processes and ROI. Hence, companies must modernize their business with technological capabilities for gathering, storing and analyzing data to gain valuable insights. Using these insights, they can address the challenges they face and create opportunities to grow.

Nowadays, enterprises are trying to reap the benefits of data engineering and artificial intelligence (AI) in combination with sensible data, workflow automation, process optimization and intelligent business operations.

Introduction to AI in data engineering

Using artificial intelligence and data engineering, you can create future-proof data strategies and activate data to build a hybrid data engineering platform. Businesses can also apply these technologies to build, deploy and operate real-time data pipelines at scale, create flexible data models and amplify the value of data. A business with inconsistent data is likely to face data integration problems. So, enterprises must implement data integration and design to create consistent, comprehensive and clean information from multiple systems for AI-enabled business analysis and decision making.

Looking for expert AI and data engineering services?

Let our adept AI consultants and data engineers help you build intelligent solutions. We ensure streamlined operations, enhanced decision making, and business growth.

How do AI and data engineering work?

Data engineering is the process of collecting, translating, and validating data for analysis. AI data engineers build data warehouses to empower data-driven decisions. Data engineering lays the foundation for real-world data science applications, and AI applies data models to create predictive and prescriptive analytics for better business outcomes. A typical workflow involves the following steps:

1. Configure connections to data sources

2. Set up datastores for staging and processed data

3. Retrieve data from heterogeneous sources

4. Store large volumes of data

5. Check data quality and wrangle the data

6. Process data to generate consistent data

7. Configure and maintain real-time data pipelines

8. Process data in batch and real-time streams

9. Apply several machine learning models iteratively

10. Generate the best machine learning model for the use case
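
The last two steps, trying several models and keeping the best one, can be sketched in a few lines. This is only an illustration under stated assumptions: the two candidate models (a mean baseline and a single-feature least-squares line), the toy data and names such as `select_best` are hypothetical and not taken from any particular platform. A real project would typically use an ML library and cross-validation instead.

```python
from statistics import mean

def fit_mean(xs, ys):
    """Baseline candidate: always predict the training mean."""
    m = mean(ys)
    return lambda x: m

def fit_linear(xs, ys):
    """Candidate: ordinary least squares for a single feature."""
    mx, my = mean(xs), mean(ys)
    var = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / var
    intercept = my - slope * mx
    return lambda x: slope * x + intercept

def mse(model, xs, ys):
    """Mean squared error of a fitted model on (xs, ys)."""
    return mean((model(x) - y) ** 2 for x, y in zip(xs, ys))

def select_best(candidates, train, holdout):
    """Fit each candidate on train, score it on holdout, keep the lowest error."""
    results = []
    for name, fit in candidates.items():
        model = fit(*train)
        results.append((mse(model, *holdout), name, model))
    score, name, model = min(results, key=lambda r: r[0])
    return name, model, score

# Hypothetical toy data following y = 2x + 1.
train = ([1, 2, 3, 4], [3, 5, 7, 9])
holdout = ([5, 6], [11, 13])

candidates = {"mean": fit_mean, "linear": fit_linear}
best_name, best_model, best_score = select_best(candidates, train, holdout)
print(best_name, best_score)
```

Scoring on held-out data rather than on the training data is the design choice that matters here: it keeps the comparison from simply favoring the model that memorizes best.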

Use cases of data engineering and AI

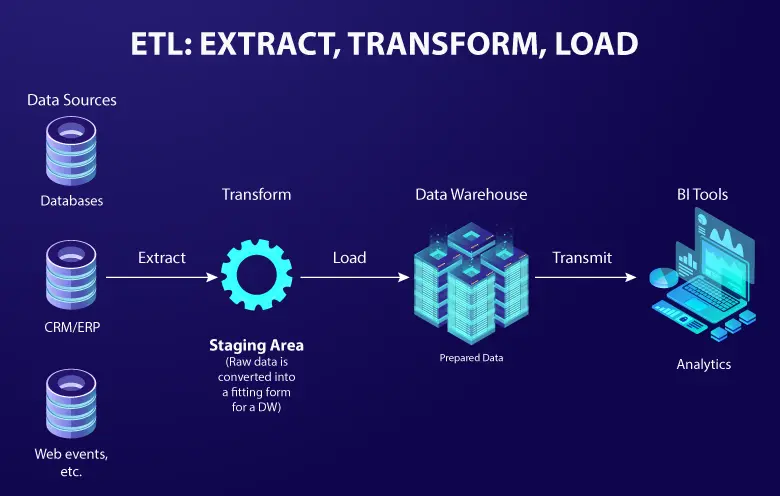

ETL / ELT

Simply dumping, pulling and transforming data makes it duplicative, error-prone and tough to manage. Using ELT tools, you can extract data from multiple sources, load it into a data warehouse or unified data repository, and transform it there.

Streamline data handling across three stages: creating data (in a data stream), processing it (unstructured data) and storing it (structured data), with AI improving data management along the way. You can also automate data workflows using tools like Apache Airflow, and train and manage different ML models.
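
As a rough illustration of the extract, load, transform order, the sketch below loads raw rows into a staging table first and only then cleans them with SQL inside the store. It uses Python's built-in `sqlite3` as a stand-in for a warehouse; the table names, the sample rows and the `run_elt` helper are assumptions made for this example.

```python
import sqlite3

# Hypothetical raw rows as they might arrive from a source system.
RAW_ORDERS = [
    ("1001", " alice ", "19.99"),
    ("1002", "Bob", "5.00"),
    ("1001", " alice ", "19.99"),        # duplicate record
    ("1003", "carol", "not-a-number"),   # invalid amount
]

def run_elt(rows):
    """Load raw rows untouched, then transform them inside the database."""
    conn = sqlite3.connect(":memory:")

    # Load: keep everything as text in a staging table.
    conn.execute(
        "CREATE TABLE staging_orders (order_id TEXT, customer TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO staging_orders VALUES (?, ?, ?)", rows)

    # Transform: cast types, normalize names, drop duplicates and bad amounts.
    # The GLOB pattern is a deliberately simple validity check, not a full parser.
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT DISTINCT
            CAST(order_id AS INTEGER) AS order_id,
            LOWER(TRIM(customer))     AS customer,
            CAST(amount AS REAL)      AS amount
        FROM staging_orders
        WHERE amount GLOB '[0-9]*.[0-9]*'
        """
    )
    cleaned = conn.execute(
        "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
    ).fetchall()
    conn.close()
    return cleaned

clean_rows = run_elt(RAW_ORDERS)
print(clean_rows)
```

Keeping the raw staging table around means a transformation can be rerun or corrected later without going back to the source systems.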

Change data capture

Change data capture (CDC) plays a major role in streaming data processing and pipelines. With the growing amount of data, CDC techniques have become indispensable for managing data inflow for real-time analytics using AI and ML.

When data changes at the source, you need to act accordingly: modify a database, change the way you process the data, or automate new responses. Using ELT to sync data stores does not eliminate this need. With stream processing, you can recognize data changes immediately and be ready to act on new variables.
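
To make the idea of change events concrete, here is a minimal sketch that derives insert, update and delete events by comparing two snapshots of a table and then replays them on a downstream copy. Snapshot comparison is just one simple way to capture changes (production systems often read the database's transaction log instead), and the function names and sample rows are hypothetical.

```python
def diff_snapshots(old, new):
    """Compare two {primary_key: row} snapshots and return change events."""
    events = []
    for key in sorted(new.keys() - old.keys()):
        events.append({"op": "insert", "key": key, "after": new[key]})
    for key in sorted(old.keys() & new.keys()):
        if old[key] != new[key]:
            events.append(
                {"op": "update", "key": key, "before": old[key], "after": new[key]}
            )
    for key in sorted(old.keys() - new.keys()):
        events.append({"op": "delete", "key": key, "before": old[key]})
    return events

def apply_events(target, events):
    """Replay change events on a downstream copy to keep it in sync."""
    for event in events:
        if event["op"] == "delete":
            target.pop(event["key"], None)
        else:
            target[event["key"]] = event["after"]
    return target

# Hypothetical customer table before and after a batch of changes.
before = {1: {"name": "Ann", "tier": "basic"}, 2: {"name": "Raj", "tier": "basic"}}
after = {1: {"name": "Ann", "tier": "gold"}, 3: {"name": "Mei", "tier": "basic"}}

events = diff_snapshots(before, after)
replica = apply_events(dict(before), events)
print([e["op"] for e in events])
```

Only the changed rows travel downstream, so the replica stays current without reloading the whole table.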

You can also reduce the bulk of the manual work in data capture, freeing your employees to focus on working with the information itself. AI-based capture does not need templates, exact definitions, taxonomies, keywords, or indexing. It extracts the relevant information and automatically makes sense of different documents, irrespective of size, language, symbols, or format.

Smart caching

Most of the data stored in warehouses is buried deep in the cloud. So, querying the data repository to retrieve something like a transaction history becomes time-consuming.

To do better, recognize users and leverage machine learning (ML) to predict what those users are likely to query. The selectively pre-loaded data can then be served instantly, decreasing latency. A smart cache also leverages advanced caching algorithms to deliver content efficiently at any location, improving the user experience.
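
The prediction step can be very simple to start with. The sketch below counts which query tends to follow which in past sessions and pre-loads the most likely next query after each request. It is a hedged illustration, not a description of any specific product: the `PredictiveCache` class, the `fetch` callable standing in for a slow warehouse lookup, and the sample sessions are all assumptions, and per-user models and cache eviction are left out for brevity.

```python
from collections import Counter, defaultdict

class PredictiveCache:
    """Cache that pre-loads the query most likely to come next."""

    def __init__(self, fetch):
        self.fetch = fetch                       # slow backend lookup (hypothetical)
        self.cache = {}
        self.transitions = defaultdict(Counter)  # query -> counts of following queries
        self.hits = 0
        self.misses = 0

    def train(self, sessions):
        """Learn follow-up frequencies from past sessions (lists of queries)."""
        for session in sessions:
            for current, following in zip(session, session[1:]):
                self.transitions[current][following] += 1

    def get(self, query):
        """Serve a query from cache if possible, then prefetch the likely next one."""
        if query in self.cache:
            self.hits += 1
        else:
            self.misses += 1
            self.cache[query] = self.fetch(query)
        self._prefetch_after(query)
        return self.cache[query]

    def _prefetch_after(self, query):
        followers = self.transitions.get(query)
        if not followers:
            return
        likely_next = followers.most_common(1)[0][0]
        if likely_next not in self.cache:
            self.cache[likely_next] = self.fetch(likely_next)

# Hypothetical usage: record which lookups actually reach the backend.
backend_calls = []

def slow_lookup(query):
    backend_calls.append(query)
    return f"result for {query}"

cache = PredictiveCache(slow_lookup)
cache.train([["balance", "history"], ["balance", "history"], ["balance", "profile"]])

cache.get("balance")   # miss; "history" is pre-loaded in the background step
cache.get("history")   # served from the cache
print(cache.hits, cache.misses, backend_calls)
```

A frequency table like this is the simplest possible predictor; a trained ML model could replace `train` and `_prefetch_after` without changing how callers use `get`.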

Stream analytics

With an AI-enabled data streaming infrastructure, you can build dashboards that give better business intelligence. Collect data from any source, layer AI business logic on top, then query it to generate insightful reports. You can also fetch reports on demand or on any schedule, in real time, irrespective of when or how often data arrives.
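
One common building block behind such dashboards is a windowed aggregate. The sketch below groups events into fixed, non-overlapping time windows by their own timestamps, so late or out-of-order arrivals still land in the right bucket. The event shape `(timestamp_seconds, key, value)`, the function name and the sample values are assumptions for illustration only.

```python
from collections import defaultdict

def tumbling_window_totals(events, window_seconds=60):
    """Sum values per (window_start, key) over fixed, non-overlapping windows.

    events: iterable of (timestamp_seconds, key, value) tuples, in any order.
    """
    totals = defaultdict(float)
    for timestamp, key, value in events:
        # Bucket by the event's own timestamp, not by arrival order.
        window_start = int(timestamp // window_seconds) * window_seconds
        totals[(window_start, key)] += value
    return dict(totals)

# Hypothetical sales events; the second one arrives out of order.
sales_events = [
    (61, "store_b", 8.0),
    (0, "store_a", 1.0),
    (59, "store_a", 2.0),
    (60, "store_a", 4.0),
]

report = tumbling_window_totals(sales_events, window_seconds=60)
print(report)
```

The resulting dictionary can be queried on demand or on a schedule, for example to feed a per-minute revenue chart.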

Machine learning

Organizations have a huge volume of unstructured data such as posts, pictures, tweets, videos, audio files, satellite imagery, sensor data and more. They need data engineering solutions with machine learning services to transform this data and identify the behaviors and preferences of clients, prospects, competitors and others. So, empower your data scientists to self-serve streaming data using advanced ML algorithms.

With the help of data engineering consulting services, you can explore, build, test, deploy and monitor your ML models more easily. Managing data systems, connecting them to raw data streams and viewing real-time model results will help you serve your data consumers appropriately.


Leverage the benefits of data engineering and AI for technological innovation

Data engineering includes data pre-processing and building infrastructure or databases, which reduces the data-processing workload for data scientists and analysts. Using cloud and artificial intelligence, data scientists can train ML models and generate thoughtful insights from gathered data.

This synergy of data engineering, cloud computing and ML model development is what modern businesses are looking for to extract value from their data. For more information on how data engineering in AI can empower your business decision-making, you can talk to our experts.