LLM development services

LLMs comprehend, analyze, and generate natural language at scale. Our team helps you select and fine-tune the model that best fits your specific business needs.

Our Clients

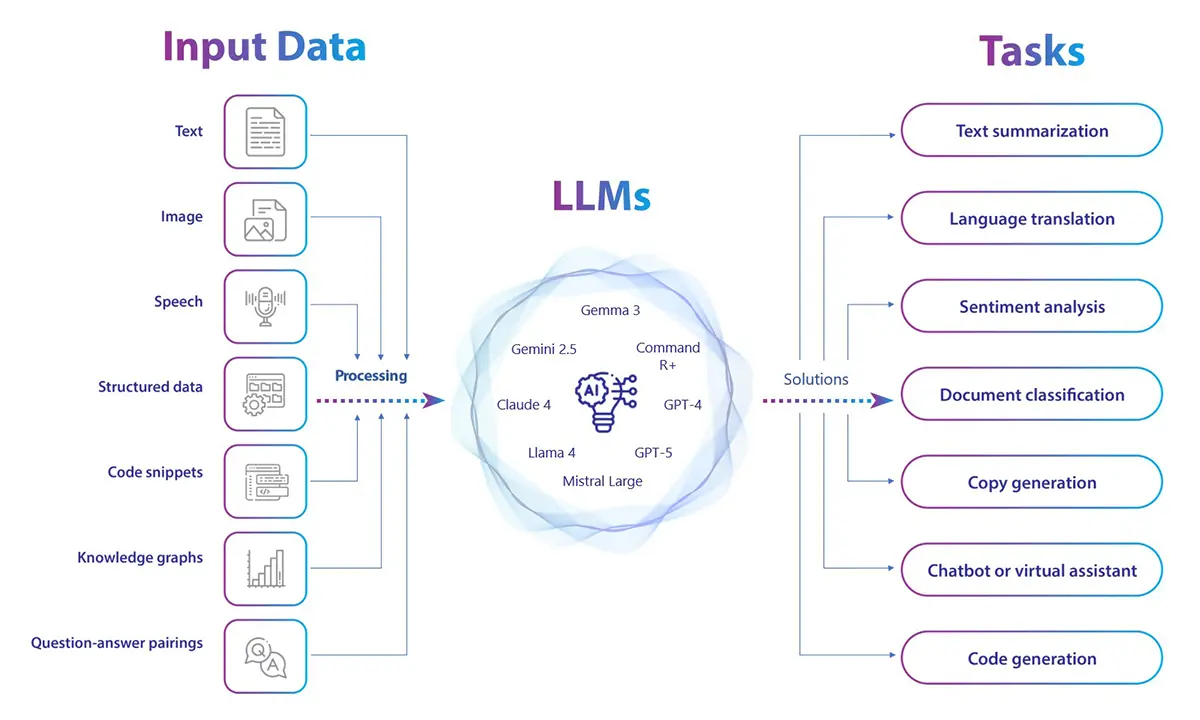

A Large Language Model (LLM) is an artificial intelligence model designed to process, understand, interpret, analyze, and generate human language. LLMs are trained on huge amounts of text data to accomplish a range of tasks, including answering questions, summarizing, translating, and generating content. Our LLM consulting services enable companies to automate content, streamline processes, and unlock new efficiencies across teams. From domain-specific model building to fine-tuning high-end LLMs such as GPT-4, GPT-5, Llama 3.1, and PaLM 2, we provide scalable AI systems customized for your requirements. We have developed and deployed 50+ LLMs in industries such as finance, healthcare, manufacturing, and retail. Our clients use them for content generation, legal research, intelligent search, customer support, and more. To explore what an LLM can do for your business, talk to our AI consultant.

We create concise strategies to build LLM solutions that meet your industry demands and business objectives. We start by obtaining a thorough understanding of your existing business process, functional gaps, and individual use cases. The clarity on these details allows us to craft the ideal development roadmap.

We create and optimize LLMs to suit your business requirements, from model selection and architecture design to data curation, training, and deployment. Whether you’re creating a lightweight assistant or a specialized solution, our LLM development services are designed to address your problems and grow with your goals. Our experts create intelligent LLM solutions with capabilities such as sentiment analysis, natural language processing, and speech recognition.

Our experts simplify LLM integration with your current systems so you can scale up easily and keep processes running smoothly. We use cutting-edge methodology, identify the risks early, and provide end-to-end integration for zero workflow interruption and downtime.

Our experts optimize widely used LLMs such as BERT and GPT in collaboration with your organization. We fine-tune LLMs to help you achieve unmatched performance. Our development approaches are designed to fit your business-specific needs and industry applications for the best possible performance and guaranteed industry compliance.

We train large language models on curated, real-time, and industry-specific data to make them familiar with your business context and adapt to evolving requirements. Our systematic training process fine-tunes model behavior, improves accuracy, and boosts contextual awareness, making your LLM smarter, faster, and more aligned with your goals.

We deploy LLMs into your current infrastructure with no disruption, keeping integration smooth and efficient. Our iterative process prioritizes ongoing model testing, performance tracking, and upgrades, so your LLM solutions remain accurate, secure, and responsive to changing business requirements. On cloud or on-prem, we customize deployment for scale, speed, and long-term impact.

We create LLM-driven apps that comprehend language, process data, and provide smart user experiences. From virtual assistants and chatbots to document analysis software, content generators, and knowledge management systems, our apps are engineered to automate processes, personalize experiences, and improve decision-making. With an in-depth understanding of your industry and users, we make each solution scalable, secure, and future-ready.

Our AI experts provide end-to-end model maintenance and support services. They monitor performance, refine models as data changes, and implement necessary updates to ensure your LLM-based applications keep functioning smoothly. We resolve problems related to breakdowns and model updates, providing high-quality outcomes for business expansion.

Our LLM team is skilled at prompt engineering, which covers prompt design, optimization, and evaluation to produce the desired answers and enhance the quality of LLM responses. We design optimized prompts to ensure maximum accuracy, efficiency, and context awareness in LLM-based solutions.

We design and deploy Small Language Models (SLMs) tailored to your business needs. By using simplified yet powerful neural architectures, our SLMs train faster, run smoothly on limited-resource devices, and integrate seamlessly into your workflows. The result is cost-effective, accurate, and scalable AI that enhances customer support, powers chatbots, automates processes, and enables smarter decision-making at the edge.


We develop custom NLP applications using industry-standard frameworks such as TensorFlow, NLTK, and spaCy. These tools help extract insights from data sources such as search queries, web content, business repositories, and audio for predictive analytics and intelligent data discovery.

Our team builds advanced machine learning solutions using extensive toolkits (such as scikit-learn, Keras, PyTorch, TensorFlow, and Caffe) across supervised, unsupervised, and reinforcement learning paradigms. We develop robust and scalable ML solutions tailored for your business.

We use prompt engineering to improve the contextual understanding, reasoning capability, and problem-solving performance of LLMs by enabling models to adapt dynamically to a particular task with minimal retraining.
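As a rough illustration of adapting a model to a task without retraining, a few-shot prompt places a handful of worked examples in front of the new input. The sketch below is one possible way to assemble such a prompt; the function name `build_few_shot_prompt`, the ticket-routing task, and the example tickets are all hypothetical and not tied to any particular model or toolkit.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    query: str,
) -> str:
    """Assemble a few-shot prompt: instruction, worked examples, then the new input."""
    lines = [instruction.strip(), ""]
    for text, label in examples:
        lines.append(f"Input: {text}")
        lines.append(f"Output: {label}")
        lines.append("")
    # The final block leaves "Output:" open for the model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


# Hypothetical support-ticket routing task; the examples are invented for illustration.
prompt = build_few_shot_prompt(
    instruction="Classify each support ticket as 'billing', 'technical', or 'other'.",
    examples=[
        ("I was charged twice this month.", "billing"),
        ("The app crashes when I open settings.", "technical"),
    ],
    query="My invoice shows the wrong amount.",
)
print(prompt)
```

Swapping the examples changes the task the model is steered toward, which is why this kind of adaptation needs little or no retraining.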

We build LLMs that seamlessly process and generate content in several formats such as text, images, audio, and video. Our experts deliver comprehensive AI solutions for complex, real-world applications.

We accelerate development cycles by adapting pre-trained large-scale models to your specific business domains and use cases, reducing time-to-market while maintaining high performance.

We embed fairness, explainability, and safety mechanisms throughout LLM pipelines, ensuring your AI solutions are trustworthy, compliant, and ethically sound. We continuously monitor model outputs and maintain audit trails to detect and address potential biases.

Our LLMs require minimal or no training data to adapt to new tasks. This minimizes data collection costs and accelerates scaling initiatives. It also enables rapid deployment across multiple use cases without the lengthy data preparation cycles of traditional ML approaches.

We implement strong control layers through rule-based filters and verification pipelines that identify and mitigate incorrect or fabricated output, improving the accuracy and reliability of responses.
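To make the idea of a control layer concrete, here is a minimal sketch that combines two rule-based filters with one verification step. Everything in it is an assumption made for illustration: the rule names, the regular expressions, the `[source: id]` citation format, and the `check_output` helper do not describe a specific production pipeline.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Illustrative rules only; a real deployment would define these with domain experts.
BLOCKED_PATTERNS = {
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "absolute_claim": re.compile(r"\b(guaranteed|100% accurate)\b", re.IGNORECASE),
}


@dataclass
class CheckResult:
    passed: bool
    violations: List[str] = field(default_factory=list)


def check_output(answer: str, allowed_sources: List[str]) -> CheckResult:
    """Apply rule-based filters, then verify that every cited source is known."""
    violations = [
        name for name, pattern in BLOCKED_PATTERNS.items() if pattern.search(answer)
    ]
    # Verification step: citations are assumed to look like [source: some-id].
    for cited in re.findall(r"\[source:\s*([^\]]+)\]", answer):
        if cited.strip() not in allowed_sources:
            violations.append(f"unknown_source:{cited.strip()}")
    return CheckResult(passed=not violations, violations=violations)


result = check_output(
    "Refunds take 5 days [source: policy-2024]. Contact jane@example.com.",
    allowed_sources=["policy-2024"],
)
print(result)
```

A response that fails the check could be blocked, regenerated, or routed to a human reviewer, depending on the use case.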

We integrate LLMs with external databases and vector stores to deliver real-time, verifiable responses grounded in authoritative data sources, ensuring accuracy and relevance. This approach, known as retrieval-augmented generation (RAG), also reduces hallucinations and keeps your systems updated with the latest information without requiring model retraining.
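The retrieval step behind RAG can be sketched in a few lines. The example below is a toy under stated assumptions: word counts stand in for a real embedding model, an in-memory dictionary stands in for a vector store, and the knowledge-base snippets and function names are invented for illustration.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: Dict[str, str], k: int = 2) -> List[Tuple[str, float]]:
    """Return the ids and scores of the k documents most similar to the query."""
    q = embed(query)
    scored = [(doc_id, cosine(q, embed(text))) for doc_id, text in documents.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


def build_grounded_prompt(query: str, documents: Dict[str, str], k: int = 2) -> str:
    """Put the retrieved passages in front of the question so the answer can cite them."""
    hits = retrieve(query, documents, k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id, _ in hits)
    return (
        "Answer using only the context below and cite the passage id.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


# Invented knowledge-base snippets, for illustration only.
kb = {
    "returns": "Customers can return products within 30 days of delivery.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Hardware products carry a one year warranty.",
}
question = "How many days do customers have to return products?"
grounded_prompt = build_grounded_prompt(question, kb, k=1)
print(grounded_prompt)
```

Because the knowledge base is consulted at query time, updating a passage in `kb` changes the grounded prompt immediately, with no change to the model itself.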

We create high-quality, privacy-compliant synthetic datasets to train and evaluate LLMs for sensitive domains such as healthcare and defense, overcoming the challenge of data scarcity while maintaining confidentiality.

Turn language into action with AI-driven insights, automation, and intelligent solutions.

Would you like to explore a case specific to your industry or business?

LLMs make legal work faster and more accurate with AI-powered support. Our solutions help legal teams handle documents with greater ease and fewer errors.

LLMs save time and increase creativity by assisting teams in writing, editing, and summarizing content across a broad variety of use cases. From reports to knowledge base articles, product documentation, training content, and marketing copy, LLMs make content production faster, easier, and more consistent.

Stay ahead of the curve with real-time insights from customer feedback and market trends. LLMs help you make smarter decisions backed by data.

Turn scattered data into a smart knowledge hub. Our solutions help your teams find answers quickly and reduce time spent searching for information.

Improve your customer service with AI that handles routine questions and support tasks. LLM solutions handle general queries and give your team more time to focus on complex issues.

Break language barriers and connect with global audiences effortlessly. Our AI solutions make multilingual communication fast, accurate, and cost-effective.

Deliver smarter suggestions that truly match customer interests. Our AI-powered recommendations help increase engagement, satisfaction, and sales.

Understand how people feel about your brand in real time. With AI-driven sentiment analysis, you can stay ahead of public opinion and protect your reputation.

Our comprehensive model selection process involves a thorough evaluation of model size, performance, task compatibility, and resources. This approach ensures the selection of the most suitable foundation model.

Our LLMs are designed for adaptability. We employ APIs, prompt engineering, and fine-tuning to align proprietary or open-source LLMs with specific goals, ensuring their flexibility to meet your evolving needs.

We validate LLM performance using industry-standard benchmarks like GLUE, SuperGLUE, SQuAD, and StrategyQA, along with A/B testing for model comparison. This approach ensures that the model’s performance meets or exceeds industry standards.
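For question-answering benchmarks in the style of SQuAD, two commonly reported scores are exact match and token-level F1. The sketch below shows a simplified version of both, applied to two hypothetical model variants side by side. It is an offline comparison on invented data, not a live A/B test, and its normalization is a simplification rather than any benchmark's official scoring script.

```python
import re
import string
from collections import Counter
from typing import List


def normalize(text: str) -> List[str]:
    """Lowercase, drop punctuation and English articles, then split into tokens."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return text.split()


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the normalized answers are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall between the two answers."""
    pred, ref = normalize(prediction), normalize(reference)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(pred), overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def average(scores: List[float]) -> float:
    return sum(scores) / len(scores)


# Invented references and outputs from two hypothetical model variants, A and B.
references = ["Paris", "30 days", "the finance team"]
model_a = ["Paris", "within 30 days", "finance"]
model_b = ["Paris, France", "30 days", "The finance team"]

for name, outputs in (("A", model_a), ("B", model_b)):
    em = average([exact_match(p, r) for p, r in zip(outputs, references)])
    f1 = average([token_f1(p, r) for p, r in zip(outputs, references)])
    print(f"model {name}: exact match={em:.2f}, token F1={f1:.2f}")
```

Exact match rewards only perfect answers, while token F1 gives partial credit, so reporting both gives a fuller picture when comparing candidates.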

Deploying and monitoring LLM-powered applications requires close attention to model performance to mitigate risks. Because LLMs evolve constantly, ongoing vigilance is essential.


Semiconductor

Our LLM solutions support semiconductor teams by structuring technical knowledge, simplifying documentation workflows, and improving collaboration across engineering and commercial functions.

Manufacturing

Our LLM solutions enable manufacturers to automate operations, minimize downtime, and make more informed decisions in the supply chain. From factory floors to maintenance, we introduce efficiency through smart automation.

Fintech and banking

We help financial institutions use LLMs to improve decision-making, customer service, and compliance. Our models support smarter transactions and safer operations.

Transport and logistics

Our LLM solutions make transport and logistics smarter, faster, and more efficient. By improving route planning, fleet tracking, and predictive maintenance, we reduce delays and operational costs.

Travel and hospitality

Our LLM-based assistants enable companies to provide smooth travel experiences. From reservations to customer support, we personalize and simplify travel.

Automotive


Legal

Our LLM solutions transform legal operations by simplifying research, drafting, and compliance. Law firms and legal teams can save time while reducing errors.

Telecom

Our LLM solutions help telecom providers automate customer support workflows, summarize network incident reports, and streamline regulatory and operational documentation to improve responsiveness across service teams.

Supply chain

Our LLM solutions support supply chain teams by structuring operational knowledge, improving coordination, and simplifying documentation across logistics and planning functions.

Industry-specific LLMs are trained on data specific to that industry, which helps them understand context better. The result is more accurate and contextually aware output, especially in industries with specialized terminology or advanced concepts.

By automating routine tasks and providing more accurate insights, LLMs can enhance operational efficiency. This allows experts to focus more on intricate decision-making processes, which eventually saves time and resources.

LLM solutions reduce operating costs by automating repetitive tasks like customer support, content creation, and data analysis. Once the models are trained, they can be scaled across departments and applications, allowing businesses to scale AI without huge infrastructure or duplicated development.

Industry-specific LLMs enable organizations to provide more advanced, customized services that precisely meet their business needs. This is also a way of differentiating from other organizations utilizing general-purpose AI systems. It also leads to better customer experience and enhanced market share.

10+ years of experience in delivering AI solutions for manufacturing, healthcare, and finance industries

60+ AI professionals, including prompt engineers, fine-tuning experts, and multilingual model architects

Seamless integration with Azure OpenAI, AWS Bedrock, and Google Gemini for scalable, secure enterprise-grade deployments

Successful real-world implementations in chatbot orchestration, document automation, semantic search, and personalized recommendations

Proven frameworks for ROI-driven LLM adoption, ensuring measurable business outcomes across diverse enterprise use cases

LLM development means building and training large language models that can understand and generate human-like text. An LLM development company offers services like model training, fine-tuning, and integration for your specific needs. These are often part of broader LLM development services.

To develop an LLM, you need a large amount of data, the right algorithms, and strong computing power. Large language model development services help with the entire process, from data preparation to model training and testing.

Large language models learn patterns in language by training on huge amounts of text. They use this knowledge to predict and generate meaningful responses. Large language model consulting can help you understand how to apply them effectively to your business.

The primary function of a large language model is to process, understand, and generate natural language text. Businesses use LLM solutions to automate tasks like content creation, chatbots, and customer support.

To fine-tune a large language model, we start by selecting a pre-trained model and then train it further using your own, domain-specific data. This helps the model learn your business context, language patterns, and unique tasks. The process includes preparing the dataset, setting training parameters, and running multiple training cycles to adjust the model’s weights and improve its performance for your specific needs.
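The dataset preparation step might look something like the sketch below. It is only an illustration: the `prompt` and `completion` field names, the JSONL layout, and the values in `training_config` are assumptions, since the expected format and sensible parameters depend on the model and training framework chosen.

```python
import json
import random
from typing import Dict, List, Tuple

Record = Dict[str, str]


def to_records(pairs: List[Tuple[str, str]]) -> List[Record]:
    """Convert (question, answer) pairs into records, skipping empty entries."""
    records = []
    for question, answer in pairs:
        question, answer = question.strip(), answer.strip()
        if question and answer:
            records.append({"prompt": question, "completion": answer})
    return records


def split_records(
    records: List[Record], validation_fraction: float = 0.2, seed: int = 42
) -> Tuple[List[Record], List[Record]]:
    """Shuffle deterministically, then hold out a validation slice."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, int(len(shuffled) * validation_fraction))
    return shuffled[n_val:], shuffled[:n_val]


def to_jsonl(records: List[Record]) -> str:
    """Serialize records as one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


# Invented domain examples; the blank entry is dropped during cleaning.
raw_pairs = [
    ("What is the notice period in clause 4?", "Thirty days."),
    ("   ", "This row has no question."),
    ("Who approves purchase orders?", "The finance team."),
    ("What is the warranty term?", "One year."),
    ("Which regions does the policy cover?", "All EU member states."),
    ("When are invoices due?", "Within 45 days of receipt."),
]
train, validation = split_records(to_records(raw_pairs))

# Placeholder values, not recommendations.
training_config = {"epochs": 3, "learning_rate": 2e-5, "batch_size": 8}

print(f"{len(train)} training records, {len(validation)} validation records")
print(to_jsonl(validation))
```

Holding out a validation slice before training makes it possible to check, after each training cycle, whether the model is improving on data it has not seen.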

There are several LLMs available today, like GPT, Gemini, Llama, and Claude. We help you choose the right model for your project.

Yes, LLMs can be fine-tuned to handle domain-specific tasks like legal document review or medical analysis. LLM development solutions include this customization to match your industry needs.

Yes, ChatGPT is powered by large language models created by OpenAI. It’s an example of what LLM development can produce: a system trained to understand and respond in natural language.

LLMs can automate customer service, generate content, extract insights from data, and much more. With the help of LLM consulting services, businesses can save time, reduce costs, and boost efficiency.

Yes, with the right LLM integration services, these models can be added to your current tools and systems smoothly. We ensure minimal disruption during the process.

Yes, we offer RAG as part of our LLM services. Whether it’s healthcare, finance, or legal, our LLM consultants build domain-specific solutions tailored to your data. Let us know what you’re tackling, and we’ll tailor an LLM solution for it.

Generative AI is a broad term for AI that can create content like text, images, or code. An LLM (Large Language Model) is a type of generative AI focused specifically on understanding and generating human language. All LLMs are generative AI, but not all generative AI models are LLMs. Our AI experts help businesses use these models effectively for language tasks.

Our LLM consulting services stand out due to our personalized approach, deep expertise in AI, and commitment to aligning models with your specific business goals. We ensure each model is tailored to optimize performance for your unique use cases.

We offer comprehensive services for custom LLM fine-tuning, including data curation, model training, prompt engineering, and task-specific adjustments. Our services enhance model performance and accuracy tailored to your business needs.

The timeline for fine-tuning an LLM varies depending on the complexity of the tasks and the amount of data involved. Typically, the process can take a few weeks to a few months to achieve optimal results.

Our comprehensive support during and after LLM implementation is designed to provide you with peace of mind. It includes regular updates, performance monitoring, and prompt resolution of any issues, ensuring your models remain efficient and effective.

We measure the success of an LLM implementation using performance metrics such as accuracy, speed, and resource utilization, along with validation metrics like precision, recall, and F1 score. Business impact assessments, including user feedback and productivity improvements, are also crucial. Continuous monitoring and feedback loops help us ensure the models deliver the desired outcomes.
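For readers less familiar with precision, recall, and F1 score, the sketch below computes all three for a binary task. The labels are invented, for example 1 could mean that a document was flagged as relevant, and the helper name `precision_recall_f1` is our own rather than a library function.

```python
from typing import Sequence, Tuple


def precision_recall_f1(
    y_true: Sequence[int], y_pred: Sequence[int]
) -> Tuple[float, float, float]:
    """Compute precision, recall, and F1 for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    # Precision: of everything predicted positive, how much was correct.
    precision = tp / (tp + fp) if tp + fp else 0.0
    # Recall: of everything actually positive, how much was found.
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (
        2 * precision * recall / (precision + recall) if precision + recall else 0.0
    )
    return precision, recall, f1


# Invented ground-truth labels and model predictions.
y_true = [1, 1, 1, 0, 0, 1, 0, 1]
y_pred = [1, 0, 1, 0, 1, 1, 0, 0]

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Precision matters most when false alarms are costly, recall when misses are costly, and F1 balances the two in a single number.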

Revolutionize your business with Softweb’s LLM consulting

With LLM solutions, there is no telling where your ideas will take you. Let’s spark the wave of transformation.