Take a moment to observe your surroundings. From the watch on your wrist to mobile devices, smart televisions, cameras and web browsers, nearly every action we take is collected and preserved as data.

You might question the purpose behind the collection and storage of this data, as well as the extensive efforts brands and companies make to acquire it. The rationale behind gathering all this personal information is to make sense of it. The resulting insights are ultimately used to enhance companies’ products and services or to deliver personalized experiences and product suggestions to their customers.

Data engineering is the discipline that makes this possible: it builds the systems that collect, store and prepare data so that valuable insights can be extracted from it.

What is data engineering?

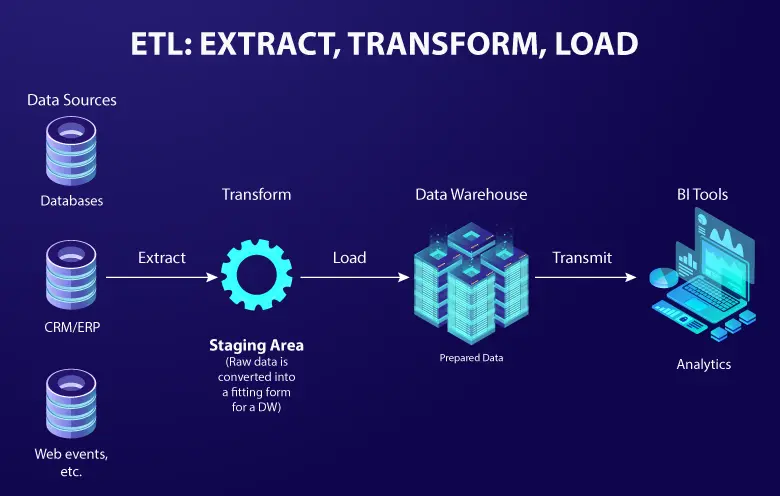

Data engineering involves the design and implementation of systems and processes to handle large volumes of data. A common example of data engineering is building a data pipeline to collect, transform and load data from various sources into a centralized database.

For instance, let’s say you want to analyze your customers’ behavior by combining data from your website, mobile app and social media platforms. A data engineer can design a pipeline that fetches data from each source, cleans and transforms it into a consistent format and loads it into a data warehouse.
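
The pipeline described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the three in-memory lists stand in for the website, mobile app and social media sources, the field names and the `events` table are hypothetical, and an in-memory SQLite database plays the role of the data warehouse.

```python
import sqlite3

# Hypothetical raw events; each source uses its own field names (assumption).
WEB_EVENTS = [{"user": "A1", "page": "/pricing", "ts": "2024-01-05T10:00:00"}]
APP_EVENTS = [{"userId": "a1 ", "screen": "Checkout", "timestamp": "2024-01-05T10:05:00"}]
SOCIAL_EVENTS = [{"handle_id": "B2", "action": "like", "time": "2024-01-05T11:00:00"}]

# Per-source mapping from raw field names to (customer, event, time).
FIELD_MAP = {
    "web": ("user", "page", "ts"),
    "app": ("userId", "screen", "timestamp"),
    "social": ("handle_id", "action", "time"),
}


def extract():
    """Extract: yield (source, raw_record) pairs from every source."""
    sources = (("web", WEB_EVENTS), ("app", APP_EVENTS), ("social", SOCIAL_EVENTS))
    for source, records in sources:
        for record in records:
            yield source, record


def transform(source, record):
    """Transform: map a raw record onto one consistent, cleaned schema."""
    user_key, event_key, time_key = FIELD_MAP[source]
    return (
        record[user_key].strip().upper(),   # normalized customer id
        source,
        record[event_key].strip().lower(),  # normalized event name
        record[time_key],
    )


def load(conn, records):
    """Load: write the unified records into the stand-in warehouse table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS events "
        "(customer_id TEXT, source TEXT, event TEXT, occurred_at TEXT)"
    )
    conn.executemany("INSERT INTO events VALUES (?, ?, ?, ?)", records)
    conn.commit()


def run_pipeline(conn):
    load(conn, [transform(source, record) for source, record in extract()])


conn = sqlite3.connect(":memory:")
run_pipeline(conn)
rows = conn.execute(
    "SELECT customer_id, COUNT(*) FROM events "
    "GROUP BY customer_id ORDER BY customer_id"
).fetchall()
print(rows)
```

Because the customer id is normalized during the transform step, the web event for `A1` and the app event for `a1 ` end up under the same customer, which is the point of converting every source to a consistent format before loading.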

Data engineers can use technologies such as Apache Kafka or AWS Kinesis for real-time data streaming, Apache Spark or Hadoop for data processing and transformation and databases like MySQL or MongoDB for storage. They can write scripts or use tools like Apache Airflow to schedule and automate the data pipeline.

Data integration tools play a crucial role in data engineering solutions by facilitating the seamless integration of diverse data sources into a unified system.

Enabling data-driven decision making

Data engineering processes contribute to the organization and structuring of data, simplifying the extraction of valuable insights and patterns. The role of data engineers is to create pipelines that generate data in a format that is readily available for analysis.

Here are some of the benefits of data engineering that help in decision-making:

- Centralizing data sources, which gives businesses a more complete and accurate view of their data.

- Freeing up time and resources by streamlining data processing.

- Enhancing data quality through cleansing, normalization and standardization.

- Enabling advanced analytics, which lets businesses identify patterns and trends in their data that would be difficult to see with traditional methods.

Scalability and performance

Data engineering lets you build a robust and scalable data infrastructure. Data engineers use distributed computing and cloud solutions to handle large data volumes, process them efficiently, and deliver timely results. They optimize data pipelines and use parallel processing techniques to enhance performance, minimize bottlenecks, and enable real-time analytics for faster insights.

Popular data engineering techniques that can help you achieve scalability and performance are:

- Data partitioning is the process of dividing large datasets into smaller, more manageable chunks.

- Data caching is the process of storing frequently accessed data in memory to reduce the amount of time it takes to access it.

- Data compression is the process of reducing the size of data without losing essential information.

- Parallel processing is the process of breaking down a task into smaller subtasks that can be processed simultaneously.

- Distributed computing is the process of using multiple computers to work together on a single task.
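
Several of these techniques can be shown together in a small Python sketch using only the standard library. The function names, the chunk size and the `region_for` lookup are hypothetical. A thread pool is used here to keep the example simple; in CPython, CPU-bound work would usually be handed to a process pool instead. Distributed computing across multiple machines is not covered by this sketch.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor
from functools import lru_cache


def partition(items, size):
    """Data partitioning: split a sequence into chunks of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def chunk_total(chunk):
    """The subtask applied to each partition."""
    return sum(chunk)


def parallel_total(items, size=250, workers=4):
    """Parallel processing: total each partition in a worker, then combine."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(chunk_total, partition(items, size)))


@lru_cache(maxsize=128)
def region_for(customer_id):
    """Data caching: repeated lookups for the same id are served from memory.

    The lookup rule is a made-up placeholder for an expensive query.
    """
    return "EU" if customer_id % 2 == 0 else "US"


def compress_roundtrip(text):
    """Data compression: lossless compression with zlib, then decompression."""
    packed = zlib.compress(text.encode("utf-8"))
    return packed, zlib.decompress(packed).decode("utf-8")


values = list(range(1000))
total = parallel_total(values)

region_for(42)
region_for(42)  # second call is a cache hit
cache_hits = region_for.cache_info().hits

payload = "customer_id,event\n" * 500
packed, restored = compress_roundtrip(payload)
print(total, cache_hits, len(payload), len(packed))
```

The partition size and worker count are tuning knobs: chunks that are too small add scheduling overhead, while chunks that are too large leave workers idle.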

Data integration and quality

Data is often scattered across multiple sources in different formats and structures. Data engineers use data integration tools to integrate and harmonize this diverse data landscape. They design and implement data integration processes to consolidate and transform data from various systems, databases, and APIs, which keeps the data consistent and coherent.

Data engineers also play a pivotal role in ensuring data quality. They implement data validation, cleansing and enrichment techniques. Leveraging data integration engineering services improves the accuracy, completeness, and reliability of the data, laying a solid foundation for downstream analytics and decision-making processes.

Typical steps in this work include:

- Data collection gathers data from a variety of sources, including databases, files and sensors. This data is then stored in a centralized repository, where it can be easily accessed and integrated.

- Data cleaning removes errors and inconsistencies and handles missing values.

- Data transformation converts data into a format that is suitable for analysis. This can involve changing the data type, format, or structure.

- Data validation checks data against known values or rules to ensure its consistency and accuracy.

- Data governance policies and procedures ensure the data is managed effectively and efficiently.
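
The cleaning, transformation and validation steps can be illustrated with a short Python sketch. The sample rows, field names and rules (an id prefix of `C-`, a non-negative amount, an ISO date format) are assumptions made up for this example, and dropping rows with missing values is only one of several possible cleaning policies.

```python
from datetime import datetime

# Hypothetical raw rows, all values still strings as they might arrive from a file.
RAW_ROWS = [
    {"customer_id": " c-001 ", "amount": "19.99", "order_date": "2024-03-01"},
    {"customer_id": "C-002", "amount": "", "order_date": "2024-03-02"},       # missing amount
    {"customer_id": "C-003", "amount": "-5.00", "order_date": "2024-03-03"},  # breaks a rule
    {"customer_id": "C-004", "amount": "42", "order_date": "03/04/2024"},     # wrong date format
]


def clean(row):
    """Cleaning: trim whitespace, normalize case, drop rows with missing values."""
    cleaned = {key: value.strip() for key, value in row.items()}
    if any(value == "" for value in cleaned.values()):
        return None
    cleaned["customer_id"] = cleaned["customer_id"].upper()
    return cleaned


def transform(row):
    """Transformation: convert strings to typed values; None if parsing fails."""
    try:
        return {
            "customer_id": row["customer_id"],
            "amount": float(row["amount"]),
            "order_date": datetime.strptime(row["order_date"], "%Y-%m-%d").date(),
        }
    except ValueError:
        return None


def validate(row):
    """Validation: check a typed row against rules; return the violations."""
    errors = []
    if not row["customer_id"].startswith("C-"):
        errors.append("customer_id must start with 'C-'")
    if row["amount"] < 0:
        errors.append("amount must be non-negative")
    return errors


def process(rows):
    """Run every row through clean -> transform -> validate."""
    accepted, rejected = [], []
    for raw in rows:
        cleaned = clean(raw)
        typed = transform(cleaned) if cleaned else None
        if typed is None:
            rejected.append((raw, ["missing or unparseable value"]))
            continue
        errors = validate(typed)
        if errors:
            rejected.append((raw, errors))
        else:
            accepted.append(typed)
    return accepted, rejected


accepted, rejected = process(RAW_ROWS)
print(len(accepted), "accepted,", len(rejected), "rejected")
```

Keeping the rejected rows together with the reason for rejection, rather than silently discarding them, makes it easier to trace quality problems back to the source system.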

Data governance and compliance

Data engineers work closely with legal and compliance teams to ensure data privacy, security and regulatory compliance. They establish data access controls, encryption mechanisms and audit trails to safeguard sensitive information. By adhering to data governance best practices, data engineers build trust and confidence in the data ecosystem. This enables organizations to handle data responsibly and ethically.

Data engineering helps data governance and compliance by:

- Ensuring that data is collected, stored and managed in a consistent and reliable way.

- Protecting data from unauthorized access, use, or disclosure.

- Providing data lineage tracking to understand where data comes from and how it is used.
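
As a rough illustration of access controls and audit trails, the Python sketch below filters a record by role and logs every read. The roles, column names and salt are hypothetical, and the audit log is a plain in-memory list. Note that the hashing step is pseudonymization, not encryption; a real system would rely on a vetted encryption library and a proper key management process.

```python
import hashlib
from datetime import datetime, timezone

# Hypothetical mapping of roles to the columns they may read (assumption).
ROLE_COLUMNS = {
    "analyst": {"customer_id", "country"},
    "support": {"customer_id", "email", "country"},
}

# In-memory stand-in for an append-only audit trail.
AUDIT_LOG = []


def pseudonymize(value, salt="demo-salt"):
    """One-way hash so records can be joined without exposing the raw id.

    This is pseudonymization, not encryption; the salt is a placeholder.
    """
    return hashlib.sha256((salt + value).encode("utf-8")).hexdigest()[:12]


def read_record(user, role, record):
    """Access control: return only permitted columns and log the access."""
    allowed = ROLE_COLUMNS.get(role, set())
    visible = {key: value for key, value in record.items() if key in allowed}
    if "customer_id" in visible:
        visible["customer_id"] = pseudonymize(visible["customer_id"])
    AUDIT_LOG.append({
        "user": user,
        "role": role,
        "columns": sorted(visible),
        "at": datetime.now(timezone.utc).isoformat(),
    })
    return visible


record = {"customer_id": "C-001", "email": "ana@example.com", "country": "PT"}
analyst_view = read_record("dana", "analyst", record)
unknown_view = read_record("sam", "intern", record)
print(analyst_view)
print(AUDIT_LOG)
```

An unknown role receives an empty record, but the attempt is still written to the audit trail, so denied reads remain visible to compliance teams.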

Infrastructure and technology expertise

Data engineering relies on a wide range of technologies, tools and platforms. Data engineers possess expertise in various programming languages, databases, distributed computing frameworks and data processing tools. They understand the trade-offs and complexities associated with different technologies, allowing them to select and implement the most suitable data engineering and analytics solutions for specific use cases. Their deep technical knowledge empowers them to design and optimize data infrastructure, ensuring scalability, reliability and performance.

Data engineers manage infrastructure and technology with their expertise in:

- Designing and developing data pipelines and infrastructure, which calls for familiarity with diverse data storage and processing technologies, along with the ability to create scalable and reliable solutions.

- Working with large datasets, using big data technologies to store, process and analyze large amounts of data.

- Troubleshooting and debugging data pipelines

- Monitoring and optimizing data pipelines
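
Monitoring can start very small. The sketch below, written under the assumption that each pipeline step is a Python function taking and returning a list of rows, wraps every step in a decorator that records row counts, duration and failures. The `monitored` decorator, the `METRICS` list and the two example steps are hypothetical names chosen for this illustration.

```python
import time
from functools import wraps

# In-memory stand-in for a metrics store.
METRICS = []


def monitored(step):
    """Record status, row counts and duration for a pipeline step."""
    @wraps(step)
    def wrapper(rows):
        start = time.perf_counter()
        try:
            result = step(rows)
        except Exception as exc:
            # Keep the failure for troubleshooting, then re-raise it.
            METRICS.append({"step": step.__name__, "status": "failed", "error": repr(exc)})
            raise
        METRICS.append({
            "step": step.__name__,
            "status": "ok",
            "rows_in": len(rows),
            "rows_out": len(result),
            "seconds": time.perf_counter() - start,
        })
        return result
    return wrapper


@monitored
def drop_empty(rows):
    return [row for row in rows if row]


@monitored
def to_upper(rows):
    return [row.upper() for row in rows]


output = to_upper(drop_empty(["a", "", "b"]))
for entry in METRICS:
    print(entry)
```

Comparing `rows_in` with `rows_out` per step shows where records are being dropped, and the recorded durations point to the steps worth optimizing first.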

Drive growth with data engineering consulting services

Businesses should opt for data engineering services to effectively manage their data assets. This will enable efficient data integration, ensure scalability and performance, unlock advanced analytics capabilities and foster data-driven decision making. By embracing data engineering and modernization solutions, businesses can leverage the power of data and stay ahead in today’s data-driven landscape.

Data engineering as a service facilitates real-time processing, streamlines data transformation and enrichment and supports the integration of machine learning and AI technologies. With the right expertise, businesses can navigate the complexities of data engineering, derive meaningful insights and gain a competitive advantage. We specialize in data-driven decision-making services that help businesses turn their data into informed strategic decisions.